A Study Reveals That 24+ Million Individuals Visit Websites That Allow the Use of AI to Undress Women in Pictures.

Some 24 million people visited AI “undressing” websites in September alone, according to Graphika, a social network analysis company. The figure points to a worrying rise in non-consensual pornography, driven by advances in artificial intelligence. The details follow.

Apps and websites that use AI to remove clothing from women in photographs are surging in popularity, alarming privacy advocates and researchers, according to a Bloomberg report. Graphika, a social network analysis company, counted 24 million visitors to these undressing websites in September alone, a sign of how quickly AI-enabled non-consensual pornography is spreading.

The number of links promoting undressing apps on platforms such as X and Reddit has increased by more than 2,400 percent since the start of the year, as the so-called “nudify” services have begun using these networks for marketing. The services use artificial intelligence to digitally undress people in photos, and they focus primarily on women. The images are often taken from social media without the consent or knowledge of the subjects, which raises serious legal and ethical problems.

The trend also opens the door to harassment: some advertisements suggest that customers can generate explicit images and send them to the person who has been digitally undressed. In response, Google has stated its policy on sexually explicit content in advertisements and says it is actively removing the offending material. X and Reddit, however, have yet to respond to requests for comment.

Privacy experts are raising concerns about the growing availability of deepfake pornography, which advances in artificial intelligence have made possible. Eva Galperin, director of cybersecurity at the Electronic Frontier Foundation, observes a shift toward ordinary people using these technologies against ordinary targets, including among high school and college students. Many victims may never learn that these manipulated images exist, and those who do face obstacles when they try to involve law enforcement or pursue legal action.

Despite the growing concern, the United States currently has no federal law that expressly prohibits the creation of deepfake pornography. A child psychiatrist in North Carolina was recently convicted of using undressing apps on images of his patients, the first prosecution of its kind under a law that prohibits the deepfake generation of child sexual abuse content. The defendant was sentenced to forty years in prison.

In response to the trend, Meta Platforms Inc. and TikTok have begun blocking keywords associated with these undressing apps. TikTok warns users that the term “undress” may be linked to content that violates its guidelines. Meta Platforms Inc. declined to comment further on the platform’s operations.

As the technology advances, the ethical and legal complexities of deepfake pornography underscore the urgent need for comprehensive regulation that protects individuals from the harmful, non-consensual use of AI-generated content.

Key Points of This News

- AI undressing websites drew 24 million visitors in September alone, heightening privacy concerns.

- The use of AI by deepfake applications raises legal and privacy concerns.

- TikTok and Meta have blocked related keywords in response to mounting ethical concerns.

Rising Instances of AI Deepfake Cases

There have also been many instances in which AI has been used to produce images and videos of prominent figures doing things they never did. Recently, Indian Prime Minister Narendra Modi urged awareness after a video of him singing while playing a guitar went viral. In another case, a video showed PM Modi dancing at a Garba event; the PM himself said during a rally that it was fake.

At the same rally, the Hon’ble PM also called for awareness of the surge in deepfake video cases.

Similarly, a deepfake video of South Indian film star Rashmika Mandanna went viral, appearing to show her in an elevator wearing a revealing, black body-hugging yoga suit; it was ultimately proven to have been forged using deepfake techniques. The original video later came to light and featured Zara Patel, a British Instagram influencer of Indian origin.

Amid the ongoing surge of deepfake videos targeting noted actresses, Bollywood stars Katrina Kaif and Alia Bhatt also fell victim to such techniques, becoming a talking point overnight.

Just two days ago, Indian business tycoon Ratan Tata posted a story on his Instagram account responding to a viral deepfake video in which he appears to urge the general public to invest money in a recent project of his, with the help of his manager Sona Agarwal, for quick 100% results. The video has been proven to be forged.

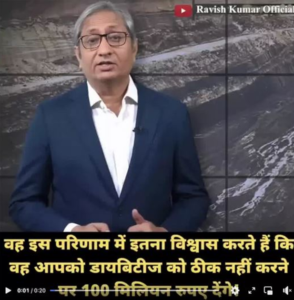

In the same vein, just three days ago, senior journalist and author Ravish Kumar alerted the public to a viral deepfake video in which he appears to recommend a particular medicine for controlling diabetes. He released a video of his own to clarify that he does not endorse the product and to advise people not to buy any anti-diabetic medicine on the strength of that clip.

About The Author:

Yogesh Naager is a content marketer who specializes in the cybersecurity and B2B space. Besides writing for the News4Hackers blog, he has written for brands including CollegeDunia, Utsav Fashion, and NASSCOM. Naager entered the field of content in an unusual way: he began his career as an insurance sales executive, where he developed an interest in simplifying difficult concepts. He combines this interest with a love of narrative, which serves him well as a writer in the cybersecurity field. He also writes frequently for Craw Security.
